Knowing How Knowledge Differs From Information Is Good Info

Although information lies at the root of knowledge, it’s important to distinguish between the two; the subtle differences may be missed by, or appear to be negligible to, a layperson, but each is an important concept in KM systems, representing a distinct phase of KM processes.

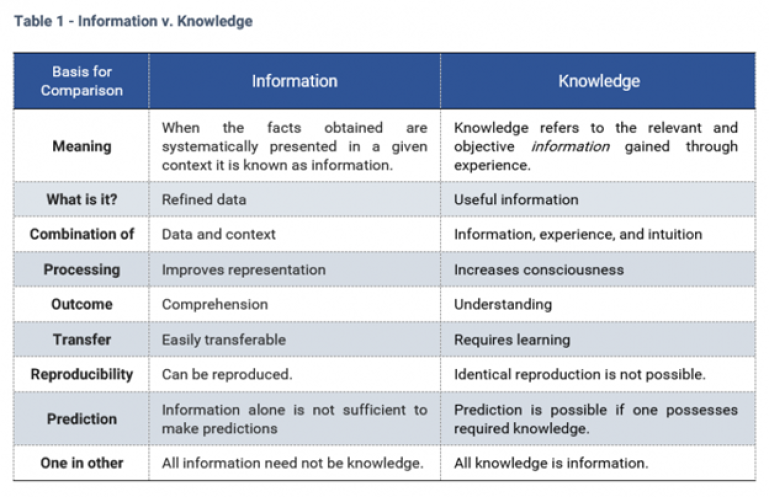

Table 1 identifies several differences that matter to KM, but, in short, Information is organized data that’s been processed, whereas Knowledge refers to links and patterns that, through education and experience, can be drawn between information sources, thus adding usefulness to mere particulars.

Of interest: The currently held scientific view on Intelligence describes it as the ability to extract patterns, and to conflate them into novel ones as well as transpose effective elements onto other areas.

The confusion that surrounds the proper usage of these terms is understandable, more so as both now commonly act as mass nouns, and even more so when you add the goofiness that data seems to produce. I’m ‘old school’ (Latin style), so I use “datum” for the singular, but most now use “data” as a mass noun that has no singular form while a good portion of the U.S. uses “data” as a singular and “datums” as the plural…

So be it, as long as we’re all clear on what is meant when each is used internally. The lack of emphasis placed on this matter explains why vague manuals and useless FAQs get mislabeled as “Knowledge Base” when what they truly are is a crappy informational effort.

Information

Information is everywhere, in all sorts of forms, but until it’s been captured and stored in a manner that converts abstraction into datum and data into organizational assets, talking about information is like talking about all the world’s trees when, really, one ought to be focusing on just a few varieties regrouped into one type of forest.

Seeing the forest for the trees, and vice versa, isn’t always easy, which is why those who are keenly aware of this fact in relation to this topic lean on the far opposite side of popular tendencies: for them, all information matters! Maybe just not right now or in ways that are yet evident, but if we can capture that data now, easily and cheaply, isn’t it better to do so than to realize later that the data would be useful, if only we had it?

As entry #2 of PDL’s Ten KM Principles states:

When talking KB, the phrase “too much information” is equivalent to saying, “frakuitchi bou satphentak,” i.e. it makes no fu%&#$@ sense!

I’m willing to bet that most of you felt some urge, even if faint, to argue this point upon reading it. After all, that phrase spawned an idiomatic pejorative, some things are better left unknown, and too much of anything is always said to be bad.

But, now that you’ve gained some awareness of the differences existing between the two, especially when applying these terms in a KM context, and knowing that one is required for the other to exist, is that urge still there?

A few years ago, I did some computational linguistics work for a software company specializing in text-extraction, codification, and analysis, as well as sentiment analysis (semantic-based analysis of brand- or market-related consumer comments made across the Web). This type of product had been gaining much ground since the early noughties due to the major leaps made in regard to data and connectivity, which helped fuel the Big Data bug, and vice versa.

Interestingly, the sudden, wide open access to data and the possible methods of capturing it created a deluge of information that overwhelmed many companies, triggering a somewhat farcical phenomenon wherein, within five years after Big Data became a mainstream focus, corporate decision-makers were now paralysed, unable to make decisions under a flood of unruly data that rendered inconclusive results, either by returning no feasible interpretation or far too many promising ones.

This created a need! Adding whimsy to the farcical, as none of the software companies could address the problem they had given birth to, a ‘nobody’ company based in Waterloo, Ont., was quick to seize on it; they created software to manage all the data generated by the software that was marketed as data solutions, automating the many required processes and IT back-end skills out of the informational mess made by these new tools. In no time at all, they made so much money they were able to buy out the already highly-lucrative “data enabler” I’d worked for, and a few other key players, too.

If you think the above illustrates and reinforces any support towards any “too much info” stance, read again. The ‘paralytic’ phenomenon mentioned above is actually the by-product of two phenomena, which explains why a junior company that created a management solution to in-demand software made by companies several development and market cycles ahead of it could, nonetheless, in a fraction of the time, buy out a bulk of the organizations that gave them their very raison d’être.

This proves that, in actuality, despite having strengthened popular, anecdotally-backed notions re this expression, no one spending company data-budgeted dollars really believed that “too much information” was the culprit—clearly, the problem was ‘management know-how’, for absolutely no trend emerged out of the then chaos based on any strategy with a goal like, “Let’s reduce our information!”

Returning to those two phenomena:

Everything-AND-the-kitchen-sink: Some principles remain the same—and will always do so—but the face of Data Science today has changed so drastically from the one of just two decades ago that no one can be blamed for thinking that this is an entirely new field. In fact, advancements have simply forged a new specialty out of an old discipline by marrying statistical approaches to IT data methods, while requirements and expectations translated into most Data Scientists having emerged out of the Computer Sciences. For these, what mattered was the wow-and-wonder of what was now accessible and doable, so, instead of focusing on asking the right questions and limiting analysis to subsequently pertinent info only, everything and the kitchen sink was fed into any problem-targeting reports or insight discovery efforts. The results bespoke awesome processing capabilities that excited techno nerds while failing to satisfy actual demands by managing to overwhelm and confuse business heads, analysts, and archivists.

The unpredictability of actions or of their consequences: Until machines are fully conceived and built by other machines without any human intervention whatsoever, everything, even machines, offers a degree of unpredictability; the common denominator in this equation is “human”. It goes without saying that it’s impossible to predict the consequences of actions which can’t be predicted, but nor, on certain scales, are we able to predict the consequences of known actions with anything that remotely qualifies as “consistent accuracy”, and, apparently, the issues that would rear their heads through the early Web-based informational/data solutions, and through how these were being applied across the corporate landscape, lie firmly among these.

The result, on the surface, may have looked like a logical argument for there being such a thing as “too much information” within a learning/intelligence framework (and some companies did buy into this belief, only to eventually face worse problems), but not knowing how to handle something and when one should be using that something doesn’t necessarily make that something the problem.

That being said, it’s important to establish another distinction, which is: In the end, having it and not needing it is always better than not having it at all, and that’s doubly true when the cost of producing information is equivalent to or lower than the cost of not producing it.

The latter is usually true if no important technological investment is required, as it implies having a well-defined information structure and workflow, a relevant degree of discipline, and a higher immediate efficacy, all of which tend to lead to long-term cost-saving pluses in most any organization.

This distinction establishes a need to set applicable boundaries. Recording the length of the big toes of each TTM is, I’m certain of it, a nice bit of information to have. With sufficient data points spanning several years, there’s no doubt that interesting correlations could be unearthed, examined, and validated and that this would surely affect our hiring protocols. And, I’m certain there are, at our disposal, several means of obtaining the info without TTMs even being aware that we’re measuring their big toes, and several firms to do it for us. But, despite the potential power that may one day be wielded by those who possess big toe info (only to regret not having decided way-back-when to record big toe girth as well), the chances of this, weighed against all the costs involved in capturing that datum for each employee, never mind human rights issues, mean that no one is measuring any big toes, either before or after hiring someone.

But what about capturing everything that, being set within established procedures, leaves a clear trace throughout the course of all our activities, in a manner that gives every single captured element worth, whether immediate or potential? And what if we could easily add to that the capture of dizzying amounts of “it’d be nice to know” data with no extra effort at all?

Not knowing how to extract oil confirmed to be under one’s land doesn’t diminish its value. These days, no fooling, information is way more valuable than oil, and we don’t even have to do any prospecting; with the number of transactions we achieve in any single day, we’re sitting on a gold-lined oil reserve.

Note that in this context, such as when speaking of transactional databases, the term “transaction” does not refer specifically to a purchase/payment operation, as per the popular interpretation. Rather, a transaction is any instance wherein an exchange occurs between two or more elements united by a common purpose. For example, pulling info from a DB, modifying it, and then committing the edits to the DB consists of at least two transactions, and three in some cases. Likewise, a call from a client can be seen as one major transaction which consists of many sub-transactions.
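To make this broader sense of “transaction” a little more concrete, here is a minimal sketch, offered only as an illustration and not as a description of any system mentioned in this article. It treats the pull from the DB and the commit back to it as two separate exchanges and records a small trace for each. The `clients` table and the helpers `record` and `update_client_note` are hypothetical names invented for the example.

```python
import sqlite3
from datetime import datetime, timezone


def record(log, kind, detail):
    """Append one exchange (a 'transaction' in the broad sense) to the log."""
    log.append({
        "at": datetime.now(timezone.utc).isoformat(),
        "kind": kind,
        "detail": detail,
    })


def update_client_note(conn, log, client_id, new_note):
    # Exchange 1: pull the current record from the DB.
    row = conn.execute(
        "SELECT note FROM clients WHERE id = ?", (client_id,)
    ).fetchone()
    record(log, "read", {"client_id": client_id, "old_note": row[0]})

    # Exchange 2: commit the edit back to the DB.
    conn.execute(
        "UPDATE clients SET note = ? WHERE id = ?", (new_note, client_id)
    )
    conn.commit()
    record(log, "write", {"client_id": client_id, "new_note": new_note})


# Hypothetical in-memory database with a single client record.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE clients (id INTEGER PRIMARY KEY, note TEXT)")
conn.execute("INSERT INTO clients (id, note) VALUES (1, 'first call')")
conn.commit()

log = []
update_client_note(conn, log, 1, "follow-up booked")
kinds = [entry["kind"] for entry in log]
print(kinds)  # ['read', 'write']
```

The task itself (updating the note) had to be done one way or another; the only addition is the standardized trace left by each exchange, which costs next to nothing to capture in the moment.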

Since information is best captured in the moment and re-creation, when still possible, rarely offers accuracy, and considering that each transaction presents an opportunity to create information and that we can capture much of it for immediate or future use simply by modifying and standardizing certain aspects to accomplish tasks that need to get done one way or another, doesn’t it seem silly to say, “No thanks, we prefer achieving the same thing, but in a manner that generates far less information, for we wouldn’t want to have too much of it”?

Knowledge

As in many fields, varying approaches and perspectives frame Knowledge Management. The divergence is, in great part, due to the broad range of interpretations given to knowledge, and the different attributes one is likely to focus on if targeting specific field-related aspects of KM, either for research or development and integration.

The strategy adopted for GJM takes on a non-restrictive perspective of knowledge, for the entire enterprise is geared towards users and a usage that’s set within clear bounds, not towards gaining an academic understanding or product R&D.

As such, three different aspects of knowledge are considered at all times, from planning every detail to defining the applied structure to the choice of supporting information technologies used. Knowledge can be, at one time or another:

- Product: Knowledge is perceived as product when it is seen as something relatively tangible, manifest. It is the most usual notion of knowledge, evident in the form of concepts, models, and theories. In organizations, knowledge as product exists in the form of documents and archives, in information systems, and as intellectual assets like design, brands, and patents.

- Process: Knowledge is perceived as process when it is seen as something that unfolds while in motion; it captures a progression or development. It is the process of knowing, of understanding, and it is mostly tacit, since the knowledge it represents is constantly moving and changing. It can be made explicit, though, as explanations, or as descriptions of methods and techniques.

Knowledge as process exists in organizational practices and routines, and the world-view and values they represent, manifesting itself in actualized procedures and processes.

- Power: Despite the common saying, i.e. “Knowledge is power”, the notion of knowledge as power is the most unusual of the three; it is perceived as power for its capacity to cause change or action. This is often a point of divergence between the academic literature emerging from Eastern cultures and that emerging from Western ones; the latter usually refers to this notion as “skills and abilities” and gives this area a different conceptual treatment.

The Eastern perspective is what’s behind our own strategy as it offers a more unified view of knowledge that better captures the kinetic and potential ‘force’ that lies in the sum of “skills and abilities” within an organization. As such, Knowledge as power is embodied in properly framed causes, reasons, and goals; it is expressed in the organization’s strategy and can be perceived in existing motives and intentions that can be both formulated and realized based on the total available employee skillsets, which vary slightly with each change in personnel. Consequently, the concretization of any strategy or vision, and of its related goals, is, in many ways, deterministically regulated by the skills of dominant and/or influential agents.

Copyright © 2018 Pascal-Denis Lussier, All rights reserved
